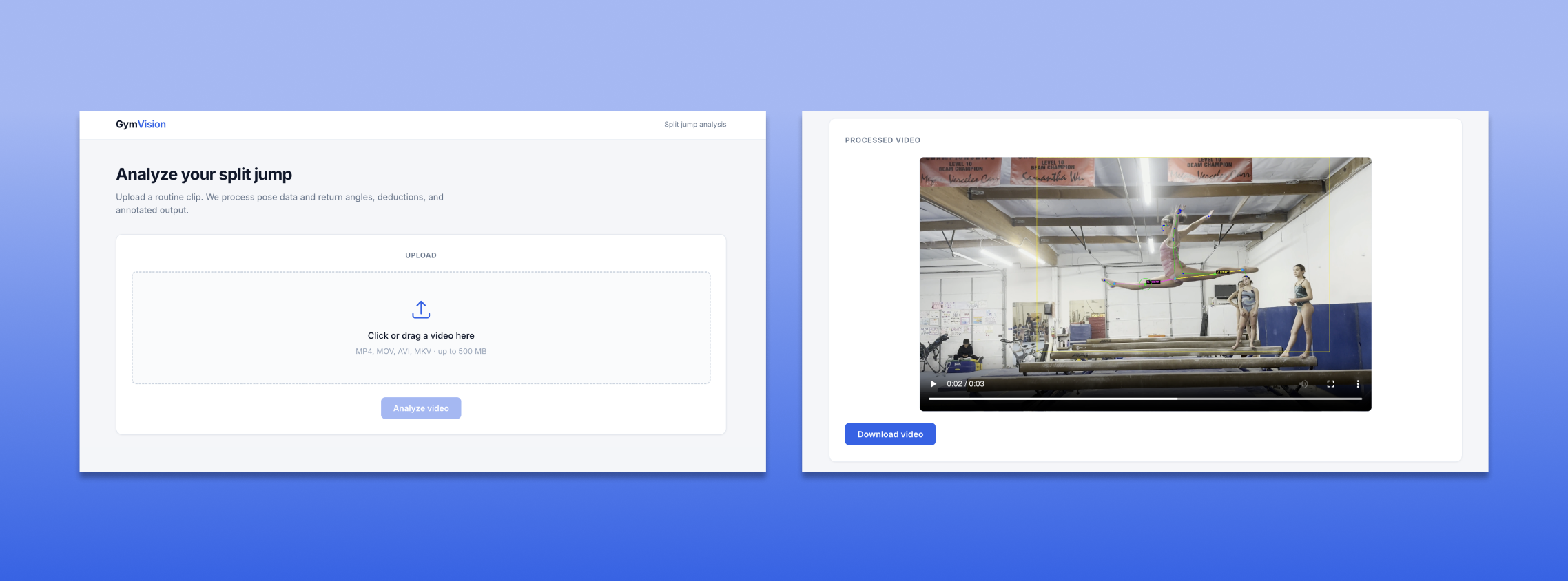

Problem: Multiple people on screen confused pose detection. In competition footage, the athlete, coach, judges, and spectators can all appear in the frame, which can cause the model to lock onto the wrong person's pose.

Approach: I developed a multi-factor selection system to consistently track the correct athlete. Instead of relying on a single heuristic, the system evaluates each detected person's movement, position within the frame, pose visibility, and detection quality to identify the most likely subject.
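
To make the idea concrete, here is a minimal sketch of how such a multi-factor score could be combined. It is not the project's actual code: the `Candidate` fields, the `WEIGHTS` values, the `centrality` helper, and the assumption that every signal is already normalized to the range 0 to 1 are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Candidate:
    """One detected person in a frame (hypothetical structure).

    All fields are assumed to be pre-normalized to the range [0, 1].
    """
    label: str
    movement: float    # how much the pose moved since the previous frame
    center_x: float    # horizontal position of the person in the frame
    center_y: float    # vertical position of the person in the frame
    visibility: float  # fraction of pose keypoints that are visible
    confidence: float  # detection quality reported by the pose detector


# Illustrative weights only; real values would need tuning on actual footage.
WEIGHTS = {
    "movement": 0.35,
    "centrality": 0.25,
    "visibility": 0.20,
    "confidence": 0.20,
}


def centrality(c: Candidate) -> float:
    """Return 1.0 at the frame center, falling to 0.0 at the corners."""
    dx = c.center_x - 0.5
    dy = c.center_y - 0.5
    dist = (dx * dx + dy * dy) ** 0.5
    max_dist = 0.5 ** 0.5  # distance from the center to a corner
    return max(0.0, 1.0 - dist / max_dist)


def score(c: Candidate) -> float:
    """Weighted sum of the four signals for one candidate."""
    return (
        WEIGHTS["movement"] * c.movement
        + WEIGHTS["centrality"] * centrality(c)
        + WEIGHTS["visibility"] * c.visibility
        + WEIGHTS["confidence"] * c.confidence
    )


def select_athlete(candidates: Sequence[Candidate]) -> Optional[Candidate]:
    """Pick the highest-scoring candidate, or None if nobody was detected."""
    if not candidates:
        return None
    return max(candidates, key=score)


if __name__ == "__main__":
    frame = [
        Candidate("athlete", movement=0.9, center_x=0.5, center_y=0.5,
                  visibility=0.9, confidence=0.9),
        Candidate("judge", movement=0.05, center_x=0.1, center_y=0.8,
                  visibility=0.5, confidence=0.95),
    ]
    best = select_athlete(frame)
    print(best.label if best else "no detection")
```

In this sketch, a seated judge with a slightly higher detection confidence still loses to the athlete, because movement and position in the frame outweigh that single signal.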

Key Insight: Simple heuristics (like choosing the largest person) were unreliable. Combining multiple signals led to more stable tracking across frames.